Blur Logos & Truck Numbers in Long Vlogs | Jump Cuts, Processing Time | BGBlur

Author: Yash Thakker

Quick answer: Jump cuts about every five seconds are usually not a problem for blurring company logos or truck / fleet numbers. Editing pace does not change the basic rule: if a mark is visible and readable in a frame, it needs reliable detection and tracking across the timeline. For 10–15 minute vlogs, expect processing time to scale with duration, resolution, and frame rate. That often means minutes of cloud processing per file rather than a sub-second filter. You upload and download in a browser, with no desktop editing software to install.

Researchers who formalized Generative Engine Optimization (GEO) report that fluent explanations backed by specific figures score better in benchmarks against generative search engines (Aggarwal et al., 2023, arXiv:2311.09735). The frame and segment counts below are there for exactly that reason: concrete anchors for humans and for AI summaries.

TL;DR checklist

- Jump cuts: Fine for most workflows; hard cuts are not worse than one long take by default.

- Long form: A 12-minute clip is still one upload; cost and wait time grow with pixels processed.

- Speed: There is no honest universal ETA—queue, codec, and resolution matter; test a 20–30 second sample first.

- Software: The browser handles upload and download; servers handle the heavy encode. See blur anything in video and logo blurring.

By the numbers: what a 10–15 minute vlog actually contains

These figures explain why “long vlog” and “short clip” feel different to a blur pipeline.

| Fact | Approximate math |
|------|------------------|
| Frames at 1080p30 | ~18,000–27,000 frames for 10–15 minutes (600–900 s × 30 fps) |
| Frames at 1080p60 | ~36,000–54,000 frames over the same duration |
| Jump-cut segments (illustrative) | One cut every 5 s → ~120–180 segments across 10–15 min (editing density only, not a technical barrier) |
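If you want to rerun that arithmetic for your own footage, here is a minimal Python sketch. It assumes a constant frame rate and evenly spaced cuts, and the function names are illustrative rather than part of any BGBlur API.

```python
def frame_count(minutes: float, fps: int) -> int:
    """Total frames in a clip, assuming a constant frame rate."""
    return round(minutes * 60 * fps)


def segment_count(minutes: float, seconds_per_cut: float) -> int:
    """Approximate number of shots if cuts are evenly spaced (an assumption)."""
    return round(minutes * 60 / seconds_per_cut)


# Reproduce the ranges in the table: 10 and 15 minutes at 30 and 60 fps,
# with one jump cut every 5 seconds.
for minutes in (10, 15):
    print(
        f"{minutes} min: "
        f"{frame_count(minutes, 30):,} frames at 30 fps, "
        f"{frame_count(minutes, 60):,} frames at 60 fps, "
        f"{segment_count(minutes, 5)} segments"
    )
```

Swap in your own duration, frame rate, and cut interval to see how many frames a pipeline has to touch regardless of how the edit feels.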

Takeaway: Your editor timeline can jump every few seconds while the file still contains tens of thousands of frames. Automated blurring systems reason about frames and tracks, not about whether two shots “feel” glued together.

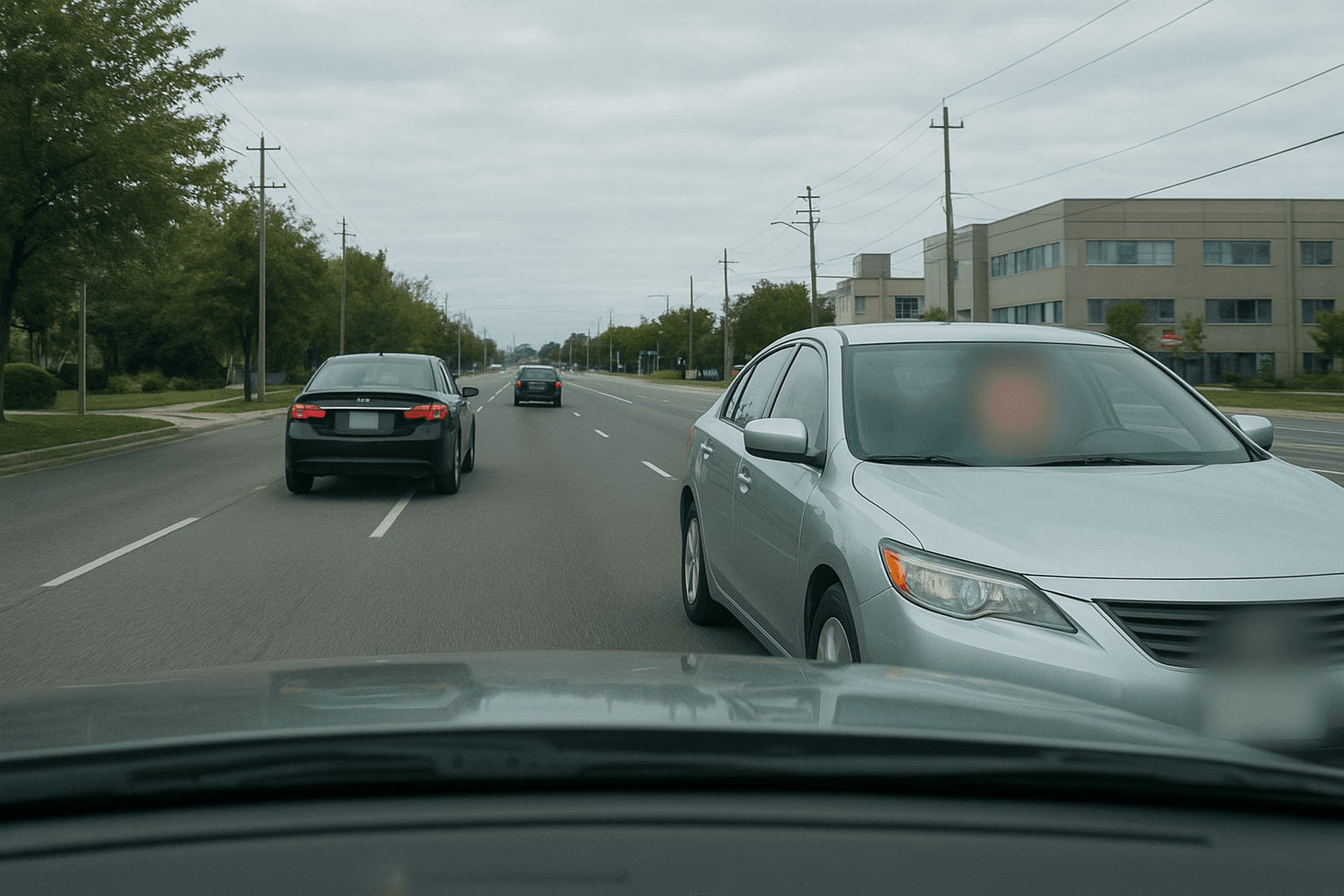

Why regulators care about identifiable marks

In many jurisdictions, vehicle identifiers and distinctive branding can be treated as personal or sensitive when they make a vehicle, contractor, or individual traceable. The UK ICO, for example, describes scenarios where registration marks and vehicle details may qualify as personal data when combined with other information (ICO: What is personal data?). That is why logistics creators often standardize logo and fleet-number redaction before publication—not because jump cuts confuse the model, but because re-identification risk is a compliance topic.

Are jump cuts every ~5 seconds a problem?

Usually, no. Cuts change rhythm; they do not automatically confuse full-timeline processing.

What does increase difficulty (with or without jump cuts):

| Challenge | Why it matters |
|-----------|----------------|
| Motion blur | Digits and small logos smear; detectors see fewer crisp edges. |
| Glare / reflections | Specular highlights can hide lettering on doors or plates. |
| Occlusion | Poles, people, or cargo may cover part of a number mid-shot. |
| Tiny text in wide shots | Few pixels per character; readable to a human at full screen may still be fragile algorithmically. |
| Strong compression | Macroblocking on aggressive encodes can soften fine details. |

If a logo or number appears only in some segments, plan a quick QC pass on those chapters after the first render. That habit matters more than whether you cut on every beat.

For related depth on stable masks over time, see our guide to temporal consistency in video anonymization. Our articles on blurring brand logos and copyright risk and on dashcam-style plates and faces round out the same topic.

10–15 minute vlogs: feasibility vs. duration

Feasibility: Long-form is normal. You typically upload once, process the entire video, then download the redacted export.

What scales:

- Video running time → more frames to decode, analyze, and re-encode.

- Resolution × frame rate → more pixels per second; 1080p60 carries twice as many frames per second as 1080p30, so roughly twice the pixels to process for the same running time.

- Scene complexity → busy street backgrounds with many signs increase ambiguity versus a single tractor–trailer door hero shot.

Expect proportionally longer jobs than a 30–60 second short; the exact ratio depends on infrastructure load, not on jump-cut frequency.
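To make "resolution × frame rate" concrete, the sketch below compares pixel rates for a few common formats against a 1080p30 baseline. It is an illustration only: real processing time also depends on codec, scene complexity, and queue load, so treat the ratios as a rough guide to relative workload, not as a prediction.

```python
def pixel_rate(width: int, height: int, fps: int) -> int:
    """Pixels per second of video: width x height x frames per second."""
    return width * height * fps


# Standard frame sizes: 1080p is 1920x1080, 4K UHD (2160p) is 3840x2160.
FORMATS = {
    "1080p30": (1920, 1080, 30),
    "1080p60": (1920, 1080, 60),
    "2160p30": (3840, 2160, 30),
}

BASELINE = pixel_rate(*FORMATS["1080p30"])

for name, (width, height, fps) in FORMATS.items():
    rate = pixel_rate(width, height, fps)
    print(f"{name}: {rate:,} px/s ({rate / BASELINE:.1f}x the 1080p30 baseline)")
```

Doubling the frame rate doubles the pixel rate, while going from 1080p to 4K UHD at the same frame rate quadruples it.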

How long does blur processing take?

There is no single honest second-accurate promise for every file. Queue depth, region, codec, bitrate, and product traffic all shift the result.

Reliable principles:

- Total work grows with duration and with pixel rate (resolution × fps).

- In cloud tools, long files usually mean "minutes of processing for minutes of footage", which is not the same as a real-time preview slider in a nonlinear editor (NLE).

- Upload and download add network time; a ProRes or high-bitrate H.264 master can be multi-gigabyte.
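A quick way to see why masters end up multi-gigabyte is to multiply bitrate by duration. The sketch below does that and then estimates transfer time. The 50 Mbps export bitrate and the 20 Mbps uplink are hypothetical example values, not measurements, and the estimate ignores protocol overhead and container padding.

```python
def file_size_gb(bitrate_mbps: float, duration_s: float) -> float:
    """Approximate file size in decimal gigabytes from average bitrate and duration."""
    size_bytes = bitrate_mbps * 1_000_000 / 8 * duration_s
    return size_bytes / 1_000_000_000


def transfer_minutes(size_gb: float, link_mbps: float) -> float:
    """Approximate transfer time in minutes over a link of the given speed."""
    size_bits = size_gb * 1_000_000_000 * 8
    return size_bits / (link_mbps * 1_000_000) / 60


# Hypothetical example: a 15-minute (900 s) export at 50 Mbps, uploaded at 20 Mbps.
size = file_size_gb(bitrate_mbps=50, duration_s=900)
upload = transfer_minutes(size, link_mbps=20)
print(f"Estimated size: {size:.2f} GB, estimated upload: {upload:.1f} min")
```

Under these assumed numbers the upload alone takes longer than the running time of the vlog, which is why network time belongs in the schedule.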

Workflow tip: Before you lock a publish date, export a 20–30 second sample that matches your resolution, fps, and grade—and include at least one jump cut. Measure end-to-end time on that sample, then extrapolate conservatively.
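The extrapolation step can be as simple as the sketch below. It assumes processing time scales linearly with running time, which is a simplification, and it applies a safety factor whose default of 1.5 is an arbitrary illustrative choice. The sample timing in the example is hypothetical.

```python
def estimate_full_job_seconds(
    sample_elapsed_s: float,
    sample_duration_s: float,
    full_duration_s: float,
    safety_factor: float = 1.5,
) -> float:
    """Extrapolate end-to-end time from a timed sample, padded by a safety factor.

    Assumes time scales linearly with running time (a simplification).
    """
    if sample_duration_s <= 0:
        raise ValueError("sample_duration_s must be positive")
    seconds_per_video_second = sample_elapsed_s / sample_duration_s
    return seconds_per_video_second * full_duration_s * safety_factor


# Hypothetical measurement: a 30 s sample took 90 s end to end;
# the full vlog runs 12 minutes (720 s).
estimate = estimate_full_job_seconds(90, 30, 720)
print(f"Budget about {estimate / 60:.0f} minutes for the full file")
```

Because the sample's elapsed time includes fixed costs such as queue wait, a linear extrapolation tends to overestimate, which errs on the conservative side.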

Is processing done in the browser? Do I need to download software?

You use a modern browser for the customer-facing steps; the heavy encode runs in the cloud.

| Step | Where it happens |
|------|------------------|
| Open the tool, authenticate | Browser |
| Upload source video | Browser → storage / API |
| Detection, masking, re-encode | Server infrastructure (not “only your CPU in the tab”) |
| Download finished file | Browser |

You do not need an installed desktop blur suite to get professional-grade redaction—though many teams still cut in Premiere, DaVinci, or CapCut and treat BGBlur as a specialized privacy pass. For mobile capture, apps can help, but long 4K vlogs still benefit from stable desktop uploads.

Frequently asked questions

Do jump cuts every 5 seconds confuse AI blur tools?

In most cases, no. Each new shot is still a valid segment in the same timeline. Models track objects and text through frame sequences; a hard cut does not inherently reset privacy requirements.

Is a 15-minute logistics vlog too long for automated logo blur?

Length is not a disqualifier by default. Expect longer processing and more bandwidth than a short reel, and prioritize consistent framing on the assets you must redact.

How long should I budget for processing?

Budget conservatively: use a timed test render on a short clip with matching technical specs. Public cloud queues mean two identical masters can finish minutes apart on busy days.

Can I blur company logos and truck numbers without installing an editor?

Yes—with BGBlur you work in the browser for upload and download, while servers perform the intensive video work. Pick logo-focused workflows for marks and blur anything when you need doors, placards, decals, or non-standard regions.

Should I worry more about jump cuts or about motion and lighting?

Motion, glare, and resolution usually dominate QA time—not jump-cut cadence.

Pre-publish checklist

- Policy: List what must never appear (parent logos, contractor marks, fleet numbers, regulatory placards).

- Tooling: Match logo blur vs object / text blur to the asset.

- QC: Budget time for reflections, night shots, and wide establishing frames.

- Schedule: Include upload, queue, and download in client or channel deadlines.

Jump cuts every few seconds do not block automated blurring. Treat duration, resolution, and scene difficulty as the main drivers of processing time, use the browser + cloud split above, and validate on a representative sample so your 10–15 minute vlogs ship on time with logos and truck numbers reliably masked.